Create Talking Avatars for Music and Creative Videos

Turn any song into a visual experience with AI talking avatars. For musicians, animators, and creative content producers, the challenge has always been creating engaging visual content without the resources for live filming, professional actors, or expensive animation studios. AI-powered talking avatar technology has fundamentally changed this landscape, offering indie artists and creators the ability to produce professional-quality music videos and entertainment content from their home studios.

The Visual Content Gap in Modern Music Production

The music industry has evolved dramatically in the digital age. Streaming platforms and social media have made audio distribution accessible to everyone, but visual content remains a critical differentiator. Artists who can deliver compelling music videos consistently outperform those who rely solely on static images or audio tracks. YouTube, TikTok, and Instagram prioritize video content, and algorithms favor creators who can maintain viewer engagement through dynamic visuals.

Traditional music video production presents significant barriers. Professional shoots require budgets ranging from thousands to hundreds of thousands of dollars. Even low-budget productions demand coordination of crew members, location scouting, equipment rental, and post-production editing. For independent artists working with limited resources, these requirements often make regular video content impossible.

Talking avatar technology eliminates these barriers by enabling creators to generate fully animated, lip-synced characters that perform their music without any physical filming. This democratization of visual content creation represents one of the most significant shifts in creative production since digital audio workstations revolutionized music recording.

Act 1: Using TopMediai and DomoAI for Animated Music Video Creation

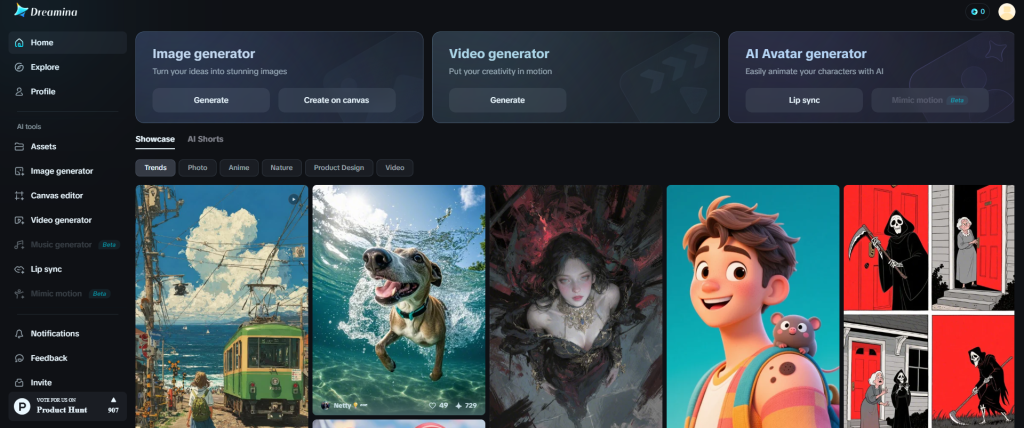

The foundation of AI-powered music video creation begins with understanding the available platforms and their specific capabilities. TopMediai and DomoAI have emerged as leading solutions for creators seeking to transform audio tracks into animated visual experiences.

TopMediai: Accessibility and Versatility

TopMediai offers an intuitive platform specifically designed for creators without technical animation backgrounds. The service provides a comprehensive library of pre-designed avatar templates ranging from photorealistic human characters to stylized artistic representations. This variety allows musicians to select visual aesthetics that align with their musical genre and brand identity.

The platform’s strength lies in its streamlined workflow. Creators begin by uploading their audio track—whether a complete song, vocal recording, or instrumental piece. The AI analyzes the audio file, identifying vocal patterns, rhythm, and tonal variations. This analysis forms the basis for generating synchronized avatar movements.

TopMediai’s template customization features enable artists to modify avatar appearance, including facial features, hair styles, clothing, and accessories. For musicians building consistent brand identities across multiple releases, this customization ensures visual continuity. The platform also supports background customization, allowing creators to place avatars in various environments from abstract digital spaces to realistic settings.

One particularly valuable feature for music creators is the multi-avatar capability. Artists producing songs with multiple vocal parts or conversational elements can generate scenes with several characters interacting. This functionality opens possibilities for visual storytelling that extends beyond simple performance videos into narrative-driven content.

DomoAI: Artistic Transformation and Style Transfer

DomoAI approaches avatar creation from a different angle, emphasizing artistic style transformation. While TopMediai focuses on character animation, DomoAI excels at converting standard video footage or images into specific artistic styles—anime, comic book aesthetics, watercolor effects, and numerous other visual treatments.

For music video creators, DomoAI’s power lies in its ability to transform basic avatar animations into visually distinctive pieces. A creator might generate a standard talking avatar using one platform, then process that result through DomoAI to apply anime styling, creating a final product that stands apart from typical AI-generated content.

The platform’s style library includes dozens of preset aesthetic filters, each calibrated to maintain consistency across frames while applying dramatic visual transformations. This capability is particularly valuable for artists whose musical genres align with specific visual cultures. Electronic music producers might apply cyberpunk or vaporwave aesthetics, while indie folk artists could choose hand-drawn or watercolor styles that complement their sound.

DomoAI also supports batch processing, enabling creators to maintain consistent styling across multiple videos or segments. This feature proves essential for artists producing episodic content, music series, or albums requiring visual cohesion.

Combining Platforms for Maximum Impact

The most sophisticated creators leverage multiple platforms in sequence, using each tool’s strengths to achieve results impossible with any single service. A typical workflow might involve generating the base avatar animation in TopMediai, exporting that result, then importing it into DomoAI for style transformation, before final editing in traditional video software.

This multi-platform approach requires understanding how different AI systems interpret and process visual data. File format compatibility, resolution maintenance, and frame rate consistency become important technical considerations. However, the creative possibilities justify the additional complexity, enabling artists to develop truly unique visual signatures.
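
One way to keep an eye on these technical considerations is to record each exported clip's properties and compare them between stages. The following is a minimal sketch in Python. The `ClipSpec` class, its fields, and the example values are hypothetical and are not tied to any export format that TopMediai or DomoAI actually produces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClipSpec:
    """Hypothetical record of a clip's technical properties."""
    container: str  # file container, e.g. "mp4"
    width: int      # pixels
    height: int     # pixels
    fps: float      # frames per second


def find_mismatches(source: ClipSpec, result: ClipSpec) -> list[str]:
    """Return a human-readable note for every property that changed."""
    notes = []
    if source.container != result.container:
        notes.append(f"container changed: {source.container} -> {result.container}")
    if (source.width, source.height) != (result.width, result.height):
        notes.append(
            f"resolution changed: {source.width}x{source.height} -> "
            f"{result.width}x{result.height}"
        )
    if abs(source.fps - result.fps) > 0.01:
        notes.append(f"frame rate changed: {source.fps} -> {result.fps}")
    return notes


# Illustrative values only: a base animation and a restyled export.
base = ClipSpec("mp4", 1920, 1080, 30.0)
styled = ClipSpec("mp4", 1280, 720, 24.0)
for note in find_mismatches(base, styled):
    print(note)
```

Running a check like this after each hand-off makes a silent downscale or frame rate change visible before it reaches the final edit.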

Act 2: Syncing Avatar Lip Movements to Vocals and Audio Tracks

The technical heart of effective talking avatar videos lies in precise synchronization between visual mouth movements and audio content. Poor lip-sync immediately registers as artificial and undermines viewer engagement, while accurate synchronization creates believable performances that maintain attention.

Understanding Phoneme Recognition Technology

Modern lip-sync technology relies on phoneme recognition: the identification of the individual sound units that make up speech and singing. AI systems analyze audio tracks and map each phoneme to a corresponding mouth shape (a viseme). For example, the “M” sound requires closed lips, while “Ah” demands an open mouth with visible teeth.
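
The phoneme-to-viseme idea can be illustrated with a small lookup table. This is a heavily simplified sketch: the phoneme labels, the viseme names, and the table itself are assumptions chosen for illustration, and production systems use far larger inventories and learned models rather than a fixed dictionary.

```python
# Hypothetical, heavily simplified phoneme-to-viseme table.
PHONEME_TO_VISEME = {
    "M": "closed",
    "B": "closed",
    "P": "closed",
    "AA": "open",          # the "Ah" sound
    "F": "teeth_on_lip",
    "V": "teeth_on_lip",
    "OW": "rounded",
    "UW": "rounded",
}


def to_visemes(phonemes):
    """Map phonemes to visemes, merging consecutive duplicates."""
    visemes = []
    for phoneme in phonemes:
        viseme = PHONEME_TO_VISEME.get(phoneme, "neutral")
        if not visemes or visemes[-1] != viseme:
            visemes.append(viseme)
    return visemes


# Rough phoneme sequence for the word "mama".
print(to_visemes(["M", "AA", "M", "AA"]))
# prints ['closed', 'open', 'closed', 'open']
```

Several phonemes share one mouth shape here, which is why the table maps many keys to a handful of values.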

Advanced platforms like TopMediai employ deep learning models trained on thousands of hours of human speech and singing. These models understand not just individual phonemes but the transitional movements between sounds, creating smooth, natural-looking articulation rather than robotic switching between preset mouth positions.
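
To see why transitional movement matters, compare abrupt switching with even the simplest form of blending. The sketch below uses plain linear interpolation between keyframes as a stand-in. It is not how TopMediai or any specific platform works, and the function name and keyframe format are assumptions made for illustration.

```python
def mouth_openness_at(keyframes, t):
    """Linearly interpolate mouth openness (0.0 closed, 1.0 open) at time t.

    keyframes: list of (time_seconds, openness) pairs with strictly
    increasing times.
    """
    if t <= keyframes[0][0]:
        return keyframes[0][1]
    for (t0, v0), (t1, v1) in zip(keyframes, keyframes[1:]):
        if t0 <= t <= t1:
            fraction = (t - t0) / (t1 - t0)
            return v0 + fraction * (v1 - v0)
    # Past the last keyframe: hold the final pose.
    return keyframes[-1][1]


# Closed at 0.0 s, fully open at 0.2 s, closed again at 0.5 s.
keyframes = [(0.0, 0.0), (0.2, 1.0), (0.5, 0.0)]
for t in (0.0, 0.1, 0.2, 0.35, 0.5):
    print(t, round(mouth_openness_at(keyframes, t), 2))
```

Learned models go well beyond this by shaping each transition according to the surrounding sounds, but the principle of moving through intermediate poses is the same.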

For music creators, this technology presents unique challenges compared to standard speech synchronization. Singing involves sustained notes, rapid articulation in rap or fast verses, harmonic layering, and stylized vocal techniques that differ significantly from conversational speech patterns. The best avatar platforms specifically account for these musical characteristics.

Optimizing Audio Input for Better Synchronization

The quality of lip-sync output depends heavily on audio input characteristics. Creators can significantly improve results by following specific audio preparation practices:

Vocal Clarity: Ensure lead vocals are clearly defined in the mix. Muddy or overly reverberant vocals provide less distinct phoneme information for the AI to process. Consider uploading isolated vocal tracks or mixes with prominent, dry lead vocals.

Format and Quality: Higher quality audio files (lossless WAV or FLAC rather than lossy MP3) preserve more of the original signal for analysis. While most platforms accept various formats, lossless files generally produce better synchronization.

Timing and Pacing: Extremely rapid vocal delivery, particularly in genres like speed rap or technical metal, may challenge AI synchronization capabilities. Testing sections of your track helps identify potential problem areas before committing to full video generation.

Silence and Breath Markers: Natural pauses, breaths, and silence periods help AI systems identify phrase boundaries and reset avatar mouth positions to neutral states. Completely continuous vocal lines without breaks may result in less natural-looking animation.
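
Some of these checks can be automated before uploading. The sketch below uses Python's standard `wave` module to read the basic properties of an uncompressed PCM WAV file and flag values below a threshold. The function names are hypothetical, and the default thresholds (44.1 kHz, 16-bit) are illustrative rather than requirements of any particular platform.

```python
import wave


def describe_wav(path):
    """Read basic properties of an uncompressed PCM WAV file."""
    with wave.open(path, "rb") as wav:
        frames = wav.getnframes()
        rate = wav.getframerate()
        return {
            "channels": wav.getnchannels(),
            "sample_rate_hz": rate,
            "bit_depth": wav.getsampwidth() * 8,
            "duration_s": frames / rate,
        }


def preflight_warnings(info, min_rate=44_100, min_bits=16):
    """Return a list of warnings; the thresholds are illustrative defaults."""
    warnings = []
    if info["sample_rate_hz"] < min_rate:
        warnings.append("sample rate below threshold")
    if info["bit_depth"] < min_bits:
        warnings.append("bit depth below threshold")
    return warnings
```

A call such as `preflight_warnings(describe_wav("lead_vocal.wav"))`, where the file name is a placeholder for your own export, returns an empty list when both checks pass. Vocal clarity and pacing still need a listening test.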

Fine-Tuning and Manual Adjustment

While AI synchronization has advanced dramatically, manual refinement often elevates results from good to exceptional. Most sophisticated avatar platforms provide timeline-based editors allowing creators to adjust specific moments where synchronization appears imperfect.

Key areas requiring potential adjustment include:

Consonant Clusters: Rapid consonant combinations (“str,” “scr,” “spl”) sometimes challenge automated systems, potentially requiring manual timing tweaks.

Sustained Notes: Long held notes in ballads or dramatic moments need appropriate visual representation—maintaining mouth position while incorporating subtle movement that suggests ongoing vocalization.

Vocal Effects: Distorted vocals, heavy processing, or stylized techniques may confuse phoneme recognition. Manual keyframing ensures these sections still display appropriate mouth movement.

Background Vocals: When incorporating multiple vocal layers, decide whether the primary avatar should sync to the lead vocal only or whether the harmonies should also be represented visually. Some creators generate multiple avatars for different vocal parts.
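
A manual timing tweak of the kind described above amounts to nudging a group of keyframes earlier or later. The sketch below shows the idea on a plain list of keyframes. The data format and function name are assumptions for illustration, since each platform's timeline editor exposes this differently and usually through its interface rather than code.

```python
def shift_keyframes(keyframes, start, end, offset):
    """Shift keyframes whose time falls in [start, end] by offset seconds.

    keyframes: list of (time_seconds, viseme_name) pairs.
    Returns a new list sorted by time; the input list is left unchanged.
    """
    shifted = [
        (round(t + offset, 3), viseme) if start <= t <= end else (t, viseme)
        for t, viseme in keyframes
    ]
    return sorted(shifted, key=lambda keyframe: keyframe[0])


# Pull a late consonant cluster 40 ms earlier without touching later frames.
track = [(1.00, "closed"), (1.10, "open"), (2.00, "rounded")]
print(shift_keyframes(track, start=1.0, end=1.5, offset=-0.04))
```

Offsets of a few tens of milliseconds are often enough to make a consonant land with the audio.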

Advanced Synchronization for Complex Projects

Creators producing sophisticated music videos often work with multi-track audio requiring coordination beyond simple lip-sync. Instrumental passages need appropriate avatar performance—perhaps looking toward instrument positions, reacting to musical dynamics, or transitioning between performance poses.

Some platforms support expression mapping, where audio dynamics influence not just mouth movements but facial expressions, head movements, and body language. Loud, energetic sections might trigger more animated performance, while quiet moments generate subtler movements. This dynamic responsiveness creates more engaging, realistic performances.
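
Expression mapping can be pictured as a function from loudness to animation intensity. The sketch below computes the root-mean-square (RMS) level of each window of samples and scales it to a value between 0.0 and 1.0. The function names and the `quiet` and `loud` thresholds are illustrative assumptions, not values taken from any platform.

```python
import math


def rms(samples):
    """Root-mean-square level of samples in the range [-1.0, 1.0]."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def expression_intensity(samples, window, quiet=0.05, loud=0.5):
    """Map per-window loudness to an intensity between 0.0 and 1.0.

    Levels at or below `quiet` give 0.0 and levels at or above `loud`
    give 1.0; values in between scale linearly.
    """
    intensities = []
    for start in range(0, len(samples), window):
        level = rms(samples[start:start + window])
        scaled = (level - quiet) / (loud - quiet)
        intensities.append(min(1.0, max(0.0, scaled)))
    return intensities


# A quiet window followed by a loud one.
print(expression_intensity([0.01] * 4 + [0.6] * 4, window=4))
# prints [0.0, 1.0]
```

In practice the windows would cover a fraction of a second of audio each, and the resulting curve would usually be smoothed so expressions do not flicker.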

Act 3: Creating Anime and Stylized Avatars for Unique Visual Aesthetics

Beyond technical synchronization, visual aesthetic distinguishes memorable music videos from forgettable ones. Anime and stylized avatar approaches offer musicians opportunities to develop distinctive visual identities that resonate with specific audiences and genres.

The Appeal of Anime Aesthetics in Music Culture

Anime-influenced visuals have permeated global music culture, particularly within electronic, hip-hop, and alternative genres. This aesthetic carries specific cultural associations—energy, emotional intensity, fantasy elements, and youth-oriented rebellion—that align with many musical expressions.

For musicians, anime-style avatars offer several advantages:

Genre Alignment: Electronic subgenres, J-pop, K-pop, and various alternative styles have established visual connections with anime aesthetics. Using these styles immediately communicates genre identity to potential listeners.

Emotional Expressiveness: Anime visual language employs exaggerated facial expressions and stylized emotional indicators (sweat drops, background effects, dynamic lines) that effectively communicate feeling beyond realistic representation.

Fantasy and World-Building: Anime aesthetics support fantastical elements—unusual hair colors, dramatic costumes, impossible physics—enabling musicians to create immersive visual worlds around their music.

Cultural Cachet: Within many online communities, anime-influenced visuals carry positive associations with creativity, digital nativity, and alternative culture.

Technical Approaches to Anime Avatar Creation

Creating effective anime-style talking avatars requires understanding both the source aesthetic and available technical methods:

Style Transfer Processing: Platforms like DomoAI apply anime aesthetics to existing avatar animations or video footage through neural style transfer. This approach analyzes anime visual characteristics—line weights, color palettes, shading techniques—and applies them to input content while maintaining underlying movement and structure.

Native Anime Generators: Some specialized platforms generate anime characters directly rather than converting existing content. These systems often provide more control over specific anime style elements but may offer less sophisticated animation capabilities.

Hybrid Workflows: Advanced creators might combine multiple approaches—generating base character designs in anime-specific tools, animating through lip-sync platforms, then applying additional style processing for polish.

Beyond Anime: Exploring Alternative Stylization

While anime represents one popular aesthetic direction, countless other stylization options enable musicians to develop unique visual identities:

Comic Book Styles: Bold outlines, halftone shading, and dynamic panel-inspired compositions create superhero or underground comic aesthetics suitable for rock, punk, or hip-hop.

Painterly Approaches: Watercolor, oil painting, or impressionistic effects generate artistic, contemplative visuals appropriate for indie folk, jazz, or classical fusion.

Retro Digital Aesthetics: 8-bit, 16-bit, vaporwave, or early CGI styles appeal to nostalgic audiences and complement electronic, synthwave, or experimental genres.

Abstract and Surreal Treatments: Distorted, glitched, or psychedelic effects push beyond representational avatars into pure visual expression, ideal for experimental, ambient, or avant-garde music.

Rotoscoped Realism: Techniques mimicking traditional rotoscoping create unique semi-realistic aesthetics that bridge animation and live action.

Developing Consistent Visual Identity

For artists building long-term careers, visual consistency across releases strengthens brand recognition and audience connection. When working with stylized avatars, establishing and maintaining specific visual parameters becomes crucial:

Character Design Documentation: Create detailed references for your avatar persona—specific features, color palettes, typical expressions, and characteristic poses. This documentation ensures consistency across multiple videos produced at different times or on different platforms.

Style Guidelines: Define your aesthetic boundaries—which effects, filters, or treatments align with your artistic vision and which don’t. These guidelines prevent visual drift as you explore different tools and techniques.

Evolution Over Time: While consistency matters, allowing gradual visual evolution mirrors natural artistic growth. Plan how your avatar and aesthetic might mature across albums or career phases.
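
Character documentation and style guidelines are easier to keep consistent when they live in a structured file rather than in memory. The sketch below shows one possible shape for such a reference sheet. The `AvatarStyleSheet` class, its fields, and every example value are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AvatarStyleSheet:
    """Hypothetical reference sheet for one avatar persona."""
    name: str
    palette_hex: list[str]
    signature_features: list[str]
    allowed_styles: list[str] = field(default_factory=list)
    excluded_styles: list[str] = field(default_factory=list)

    def allows(self, style: str) -> bool:
        """A style is usable if it is listed as allowed and not excluded."""
        return style in self.allowed_styles and style not in self.excluded_styles


sheet = AvatarStyleSheet(
    name="Example Persona",
    palette_hex=["#1B1F3B", "#F25F5C", "#FFE066"],
    signature_features=["silver bob haircut", "round glasses"],
    allowed_styles=["anime", "watercolor"],
    excluded_styles=["photorealistic"],
)

# Serialize the sheet so it can be stored alongside project files.
print(json.dumps(asdict(sheet), indent=2))
print(sheet.allows("anime"))
```

Saving the JSON output with each release gives a dated record of how the character looked, which also supports planned evolution over time.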

Practical Workflow: From Concept to Finished Music Video

Knowledge of individual tools and techniques only becomes valuable once it is integrated into an effective production workflow. Here’s a comprehensive process for creating talking avatar music videos:

Pre-Production Planning

1. Concept Development: Define the video’s purpose, target audience, and desired emotional impact. Will this be a performance video, narrative piece, or abstract visual experience?

2. Style Selection: Choose aesthetic direction based on genre, existing brand identity, and target platform requirements (YouTube, Instagram, and TikTok each have different optimal formats).

3. Audio Preparation: Finalize your track, create optimized vocal exports, and identify specific sections requiring special treatment (instrumental breaks, vocal layering moments, dynamic changes).

4. Avatar Design: Select or customize your character, ensuring visual elements align with your musical identity and chosen aesthetic.

Production Execution

1. Initial Generation: Upload audio to your chosen platform and generate base avatar animation. Review for major synchronization issues or technical problems.

2. Refinement: Address any lip-sync problems, adjust timing, and refine avatar performance to match musical energy and phrasing.

3. Style Application: If using platforms like DomoAI, process your base animation through style transfer to achieve desired aesthetic treatment.

4. Background and Effects: Add or customize backgrounds, incorporate visual effects that complement musical moments, and ensure overall visual coherence.

5. Multiple Angles: Consider generating several versions with different camera angles or avatar positions, providing variety for final editing.

Post-Production Polish

1. Video Editing: Import avatar animations into traditional video editing software (Premiere, Final Cut, DaVinci Resolve) for sequencing, transitions, and additional effects.

2. Color Grading: Apply color correction and grading to ensure consistent visual tone and enhance aesthetic impact.

3. Additional Graphics: Incorporate lyrics, title cards, or graphic elements that enhance the viewing experience.

4. Audio Finalization: Ensure audio quality remains pristine through the production process, matching your original master.

5. Format Optimization: Export in appropriate formats and specifications for intended platforms, considering vertical video for mobile-first platforms versus horizontal for YouTube.

Platform-Specific Optimization Strategies

Different distribution platforms demand specific approaches to maximize engagement:

YouTube Music Videos

YouTube favors longer content (3-5 minutes) with high retention rates. For talking avatar music videos:

– Open with immediate visual interest—establish your avatar and aesthetic within the first 3 seconds

– Incorporate visual variation throughout to maintain engagement across full song length

– Consider chapter markers for longer tracks

– Optimize thumbnails featuring your avatar in compelling poses

– Include lyric captions for accessibility and extended watch time

Instagram and TikTok Short-Form Content

These platforms prioritize vertical video (9:16 aspect ratio) under 60 seconds:

– Create impactful song snippets featuring the catchiest sections

– Design avatars with bold, easily readable visual characteristics that register on small mobile screens

– Use high-contrast aesthetics that pop against varied background feeds

– Incorporate text overlays that work with platform algorithms

– Generate multiple variations testing different moments from the same song
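
When a video was rendered horizontally, producing a 9:16 version means choosing a crop region. The arithmetic can be sketched as below. The function name is hypothetical, and rounding down to even dimensions is an assumption based on the fact that many common video encoder settings expect even widths and heights.

```python
def center_crop_box(width, height, target_w=9, target_h=16):
    """Return (x, y, crop_width, crop_height) for a centered crop.

    The crop is the largest region with roughly the aspect ratio
    target_w:target_h that fits inside the source frame.
    """
    # Compare aspect ratios with integer cross-multiplication.
    if width * target_h > height * target_w:
        # Source is wider than the target: keep full height, trim the sides.
        crop_h = height
        crop_w = height * target_w // target_h
    else:
        # Source is as tall as or taller than the target: keep full width.
        crop_w = width
        crop_h = width * target_h // target_w
    # Round down to even dimensions (assumed encoder-friendly).
    crop_w -= crop_w % 2
    crop_h -= crop_h % 2
    x = (width - crop_w) // 2
    y = (height - crop_h) // 2
    return x, y, crop_w, crop_h


print(center_crop_box(1920, 1080))
# prints (657, 0, 606, 1080)
```

A 1080p horizontal frame keeps less than a third of its width in a vertical crop, which is why the avatar needs to sit near the center of the frame, or be rendered vertically from the start.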

Streaming Platform Canvas Features

Spotify Canvas accepts vertical loops of 3 to 8 seconds, while Apple Music animated covers and similar features set their own format requirements. For any of them:

– Extract key visual moments from full videos

– Create seamless loop points so repeated viewing doesn’t feel repetitive

– Ensure avatar and aesthetic remain recognizable at small sizes

– Design for silent viewing since many users browse with audio off until actively selecting tracks
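
One simple way to get a loop without a visible jump is to play a short clip forward and then backward. The sketch below computes that frame order. It is only one possible technique, the function name is hypothetical, and reversed playback suits ambient motion better than clearly directional movement.

```python
def ping_pong_indices(frame_count):
    """Frame order that plays forward and then backward.

    The first and last frames are not repeated on the way back, so no
    frame is shown twice in a row when the sequence loops.
    """
    forward = list(range(frame_count))
    backward = list(range(frame_count - 2, 0, -1))
    return forward + backward


print(ping_pong_indices(5))
# prints [0, 1, 2, 3, 4, 3, 2, 1]
```

Because the looped sequence is nearly twice as long as the source clip, the source needs to be about half the target loop duration.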

Cost Considerations and Tool Selection

Budget significantly influences tool selection and production approach:

Free and Low-Cost Options

Several platforms offer limited free tiers or low monthly subscriptions ($10-30):

– Basic avatar generation with standard templates

– Limited rendering time or resolution

– Watermarked outputs or restricted commercial usage

– Fewer customization options

These tiers work well for creators testing concepts, building skills, or producing content for non-commercial purposes.

Mid-Tier Professional Tools

Monthly subscriptions ($30-100) typically provide:

– Commercial usage rights

– High-resolution export (1080p or 4K)

– Extended customization capabilities

– Larger asset libraries

– Priority rendering and customer support

Most serious musicians and content creators find this tier offers optimal value for regular production.

Enterprise and Custom Solutions

High-budget creators or those requiring unique capabilities might invest in:

– Custom avatar modeling matching specific visions

– Proprietary style development

– Integration with existing production pipelines

– White-label solutions for client work

These approaches make sense for established artists, production companies, or creators monetizing avatar content significantly.

Avoiding Common Pitfalls

Creators new to talking avatar production frequently encounter specific challenges:

The Uncanny Valley Problem

Photorealistic avatars that come close to true realism without quite achieving it often unsettle viewers. Solutions include:

– Embracing stylization rather than pursuing pure realism

– Ensuring consistent aesthetic treatment—don’t mix ultra-realistic skin with cartoon eyes

– Testing with objective viewers who’ll provide honest feedback

Over-Reliance on Automation

While AI tools handle heavy lifting, entirely automated workflows rarely produce exceptional results:

– Always review and refine AI-generated synchronization

– Add manual touches that reflect your unique artistic vision

– Combine platform outputs with traditional video editing for polish

Ignoring Narrative and Emotion

Technically perfect lip-sync means nothing without emotional resonance:

– Choose avatar expressions matching lyrical content

– Vary visual presentation across different song sections

– Consider how visual pacing complements musical dynamics

Platform Mismatch

Using horizontal videos on vertical-first platforms or vice versa hampers performance:

– Plan aspect ratio during pre-production

– Consider rendering multiple versions for different platforms

– Understand where your target audience actually consumes content

Future Developments and Emerging Capabilities

The talking avatar space evolves rapidly, with several emerging trends worth monitoring:

Real-Time Performance Avatars

Developing technology enables real-time avatar control through facial tracking, allowing live performances with virtual characters. Musicians could livestream concerts as their avatar personas, combining live musical performance with animated visual presence.

Interactive and Responsive Avatars

Future platforms may enable viewers to influence avatar behavior, producing interactive music videos in which audience engagement shapes the visuals and each viewing experience is personalized.

Integration with Virtual Worlds

As metaverse platforms mature, talking avatars could perform in virtual venues, creating persistent presences across digital spaces. Musicians might maintain avatar identities across multiple platforms and virtual environments.

Enhanced Emotional Intelligence

AI systems increasingly analyze not just phonemes but emotional content in vocal delivery, automatically adjusting avatar expressions to match sad, angry, joyful, or complex emotional states conveyed through performance.

Democratized Custom Model Training

Simplified machine learning tools may soon enable individual creators to train custom avatar models on their specific aesthetic preferences, generating truly unique visual styles without requiring technical AI expertise.

Building Audience Through Avatar Content

Beyond individual music videos, talking avatars enable broader audience-building strategies:

Character-Driven Content Series

Develop your avatar as a character appearing across various content:

– Behind-the-scenes commentary delivered by your avatar persona

– Reaction videos to industry news or trending topics

– Tutorial content teaching music production or creative skills

– Storytelling content expanding the narrative universe around your music

Cross-Platform Presence

Maintain consistent avatar presence across all channels:

– Profile pictures and channel art featuring your avatar

– Short-form content previewing upcoming releases

– Platform-exclusive content that rewards followers on specific channels

Community Engagement

Use your avatar to facilitate deeper community connection:

– Personalized video responses to fan comments or questions

– Avatar-delivered announcements and updates

– Collaborative content featuring fan avatars alongside yours

Merchandise and Brand Extension

A well-developed avatar becomes valuable intellectual property:

– Stickers, posters, and apparel featuring your character

– NFT collections or digital collectibles

– Licensing opportunities for use in games, apps, or other media

Conclusion: The Creative Future of Musical Visual Expression

Talking avatar technology represents far more than a simple production shortcut. It fundamentally expands creative possibilities for musical artists, enabling visual experimentation and consistent content production previously impossible for independent creators.

The democratization of these tools means visual sophistication no longer correlates directly with budget. An artist working from a bedroom studio can now produce music videos with aesthetic impact rivaling major label productions. This leveling of the creative playing field accelerates the ongoing shift toward artistic vision, rather than financial resources, as the primary determinant of success.

For musicians and content creators, the strategic question isn’t whether to explore talking avatar technology, but how to leverage it most effectively. Those who master these tools early, developing distinctive visual identities and consistent production workflows, position themselves advantageously as visual content becomes increasingly central to musical success.

The platforms and techniques outlined here provide starting points, not limitations. The most exciting avatar-based content hasn’t been created yet—it awaits artists willing to experiment, push boundaries, and discover novel applications of these emerging tools. Your music deserves compelling visuals. With talking avatar technology, nothing stands between your creative vision and its realization except your willingness to begin.

Frequently Asked Questions

Q: Do I need animation experience to create talking avatar music videos?

A: No animation experience is required. Modern platforms like TopMediai are specifically designed for creators without technical backgrounds. The AI handles all animation automatically—you simply upload your audio and customize visual preferences. While understanding basic video editing helps polish final results, you can create functional talking avatar videos with no prior animation skills.

Q: How long does it take to generate a talking avatar music video?

A: Generation time varies by platform and video length, but typically ranges from 5 to 30 minutes for a complete song. Simple avatar animations with standard templates process faster, while complex stylized treatments or high-resolution exports take longer. Many platforms queue projects, so actual waiting time depends on server load. Most creators can complete an entire project from concept to finished video within 2 to 4 hours, including preparation, generation, and post-production editing.

Q: Can I use AI-generated avatar videos for commercial purposes and monetization?

A: Commercial usage rights depend on your specific platform subscription. Free tiers often restrict commercial use or add watermarks, while paid subscriptions typically grant full commercial rights including YouTube monetization, streaming platform usage, and promotional content. Always review the terms of service for your chosen platform. Most mid-tier subscriptions ($30-100/month) include commercial rights suitable for independent musicians monetizing content across standard platforms.

Q: What audio quality works best for accurate lip-sync with talking avatars?

A: Higher quality audio produces better lip-sync results. Upload WAV or FLAC files rather than lossy MP3s when possible. Ensure vocals are clearly defined in your mix: prominent, relatively dry lead vocals with minimal reverb provide the best phoneme recognition. A sample rate of 44.1 kHz or higher and a bit depth of at least 16 bits are recommended. If synchronization seems poor, try uploading an isolated vocal track or a mix with vocals significantly louder than instrumental elements.

Q: Can I create a consistent avatar character across multiple music videos?

A: Yes, creating a consistent avatar persona across multiple releases is highly recommended for brand building. Most platforms allow you to save character customizations as templates for reuse. Document your avatar’s specific features, color schemes, and design elements to maintain consistency even when using different platforms. This visual continuity helps audiences recognize your content immediately and builds stronger connection with your artistic identity across your entire catalog.